Several transport connectors in CData Arc work with a folder structure that houses the files, in addition to the files themselves. These include:

- The File connector, used to interact with the local disk
- FTP and SFTP MFT connectors
- Cloud Storage connectors that use a folder structure, such as S3, Azure Blob, and more

You can create multiple copies of these connectors to interact with multiple folders on the same resource, but a single connector can also interact with multiple subfolders. To do this, specify the target subfolder as metadata on the messages that you process, specifically via a Subfolder header in Arc.

The Subfolder header is a specially recognized header in Arc. When it is present on an outgoing message in a supported connector, the connector tries to place the file in the named subfolder instead of directly in the parent directory.

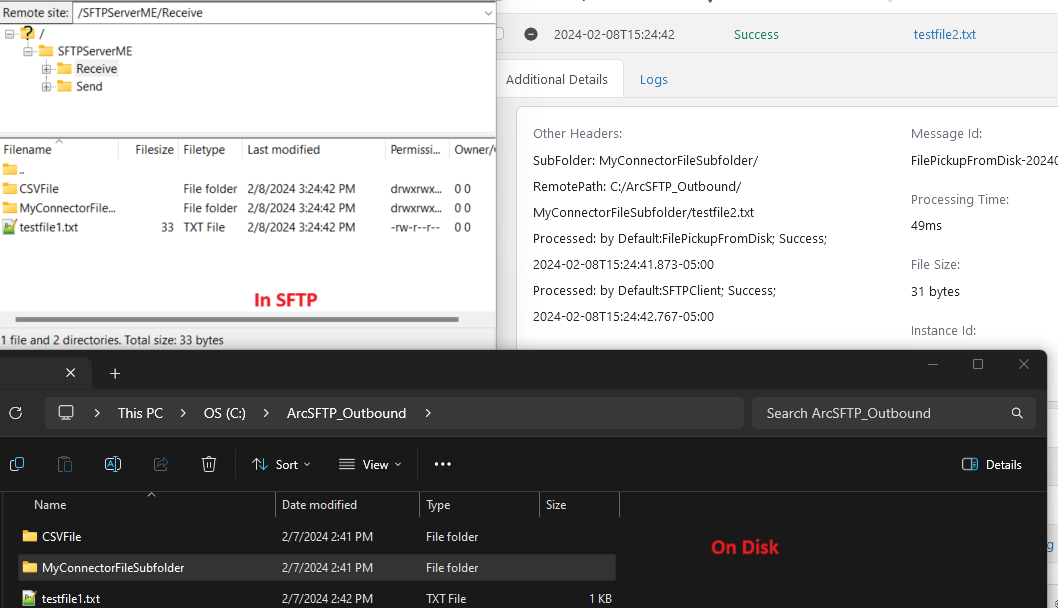

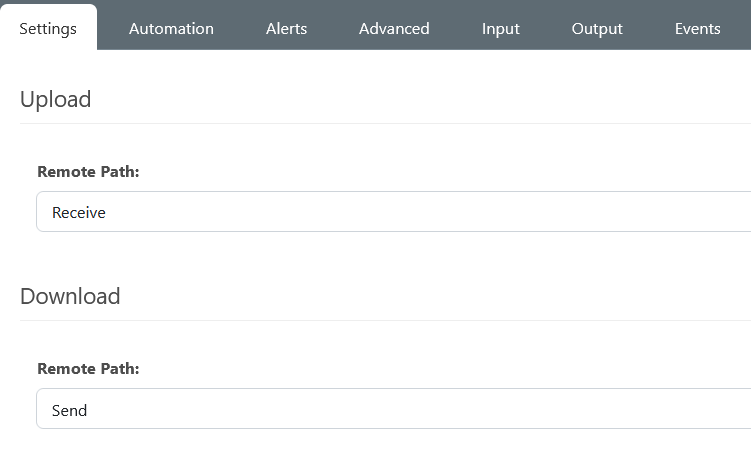

For example, when a file with a Subfolder header is sent to an SFTP server through the SFTP client connector, the connector places the file in the server subfolder named by the header. That subfolder is resolved relative to the path defined for upload.

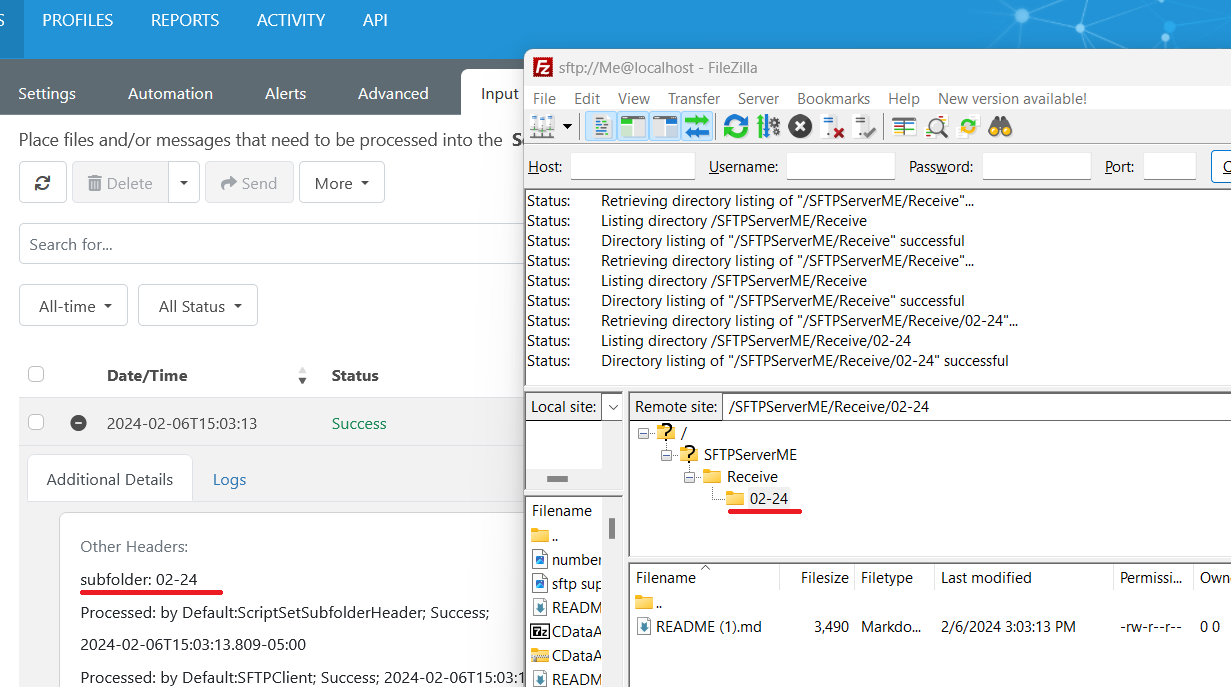
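The relative-path rule can be pictured with a small Python model. This is only a sketch of the behavior described above, not Arc's implementation; the function name `resolve_upload_path` and the sample paths are hypothetical.

```python
import posixpath
from typing import Optional


def resolve_upload_path(remote_path: str, subfolder: Optional[str], filename: str) -> str:
    """Model where a file lands: upload path + optional Subfolder header + file name."""
    parts = [remote_path]
    if subfolder:
        # Assumption: the header value is treated as relative to the upload
        # path, so surrounding slashes are stripped before joining.
        parts.append(subfolder.strip("/"))
    parts.append(filename)
    return posixpath.join(*parts)


# A message with a Subfolder header lands one level below the upload path.
print(resolve_upload_path("/uploads", "03-24", "invoice.xml"))
# A message without the header lands directly in the upload path.
print(resolve_upload_path("/uploads", None, "invoice.xml"))
```

In this model, a header value only ever extends the configured upload path; it never replaces it.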

A script is a common way to set a header on a message in Arc. The code below runs in a Script connector placed before the SFTP client connector above. It sets the Subfolder header to a dynamically generated value made up of the current month and year, then passes the file to the output of the Script connector. Because the SFTP client is next in the flow, it uses the Subfolder header value to upload each file to a subfolder with the matching month-year name under the remote path configured on the SFTP server.

<arc:set attr="output.header:subfolder" value="[now(MM-yy)]" />

<arc:set attr="output.filepath" value="[filepath]" />

<arc:push item="output" />
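To make the generated value concrete, here is a rough Python equivalent of the month-year naming. It assumes the `MM-yy` pattern in the script means a two-digit month followed by a two-digit year; the helper name `month_year_subfolder` is hypothetical and is not part of Arc.

```python
from datetime import date


def month_year_subfolder(day: date) -> str:
    """Return a subfolder name such as '03-24' for a date in March 2024."""
    # %m is the zero-padded month and %y the two-digit year, mirroring the
    # assumed meaning of the MM-yy pattern used in the script.
    return day.strftime("%m-%y")


print(month_year_subfolder(date(2024, 3, 5)))
```

Files processed during the same month therefore share one subfolder, and a new subfolder name appears when the month changes.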

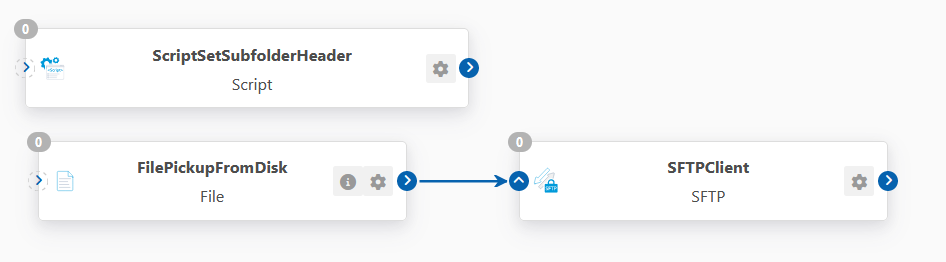

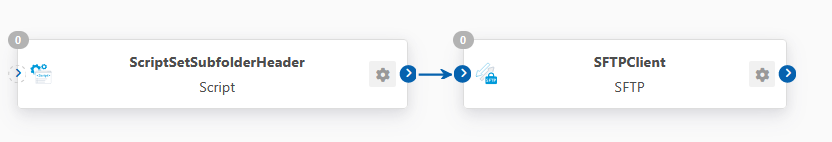

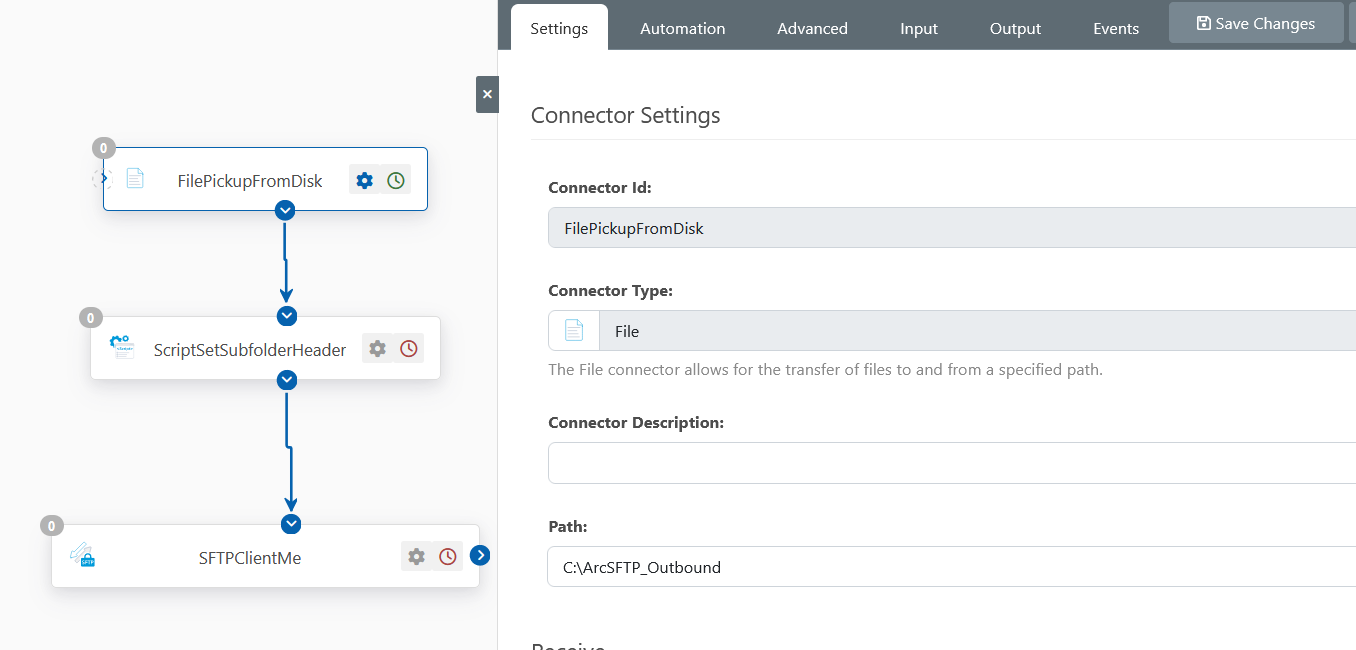

Below is an example flow that uses the Subfolder header with an SFTP client connector. In this flow, a File connector picks up files from a directory on disk, a Script connector sets the Subfolder header value on them, and the SFTP client connector uploads each file to an SFTP server.

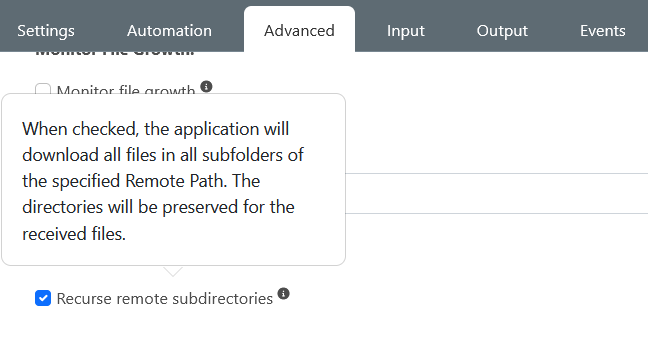

Conversely, to download all files in the subfolders of the directory you are downloading or receiving from, use the Recurse Remote Subdirectories setting under the Advanced tab of supported connectors (such as the SFTP client and File connectors):

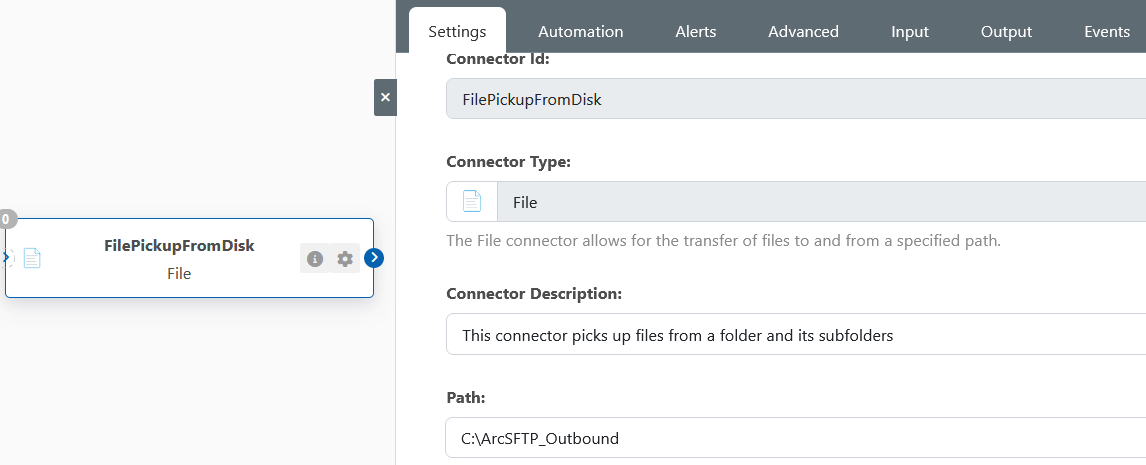

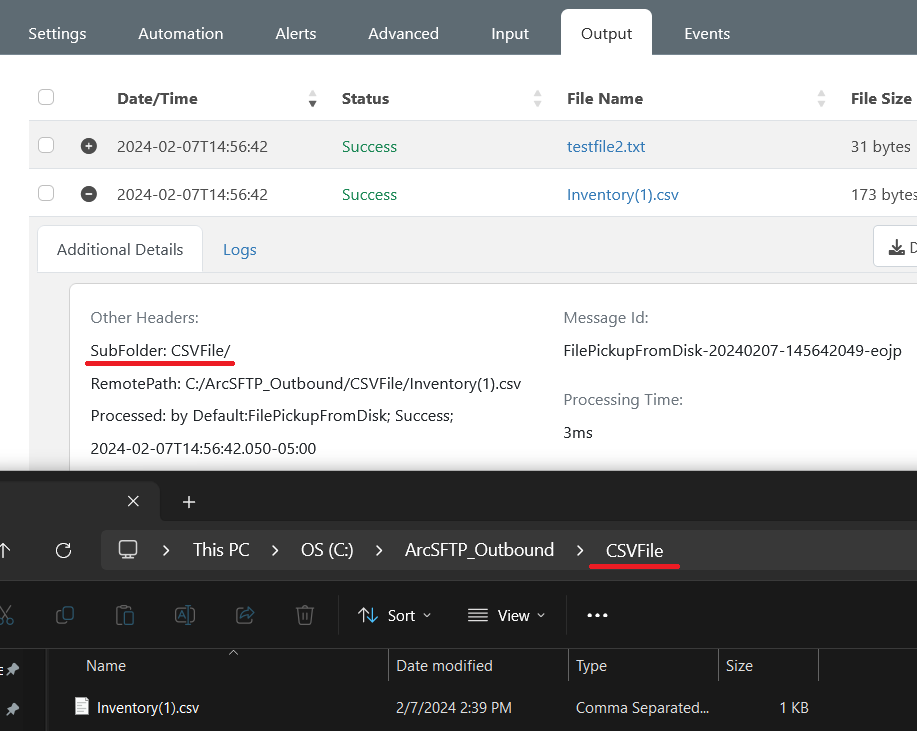

The Recurse Remote Subdirectories setting allows files to be downloaded from the subdirectories of the parent path you are downloading or receiving from. Any file picked up from a subdirectory automatically has a Subfolder header added to its metadata, which lets you keep track of the subdirectory it came from. For example, this can be seen when picking up files from subfolders using a File connector. Note that the automatically generated Subfolder header is relative to the path that you are downloading or receiving from.
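The recursive pickup can be modeled in a few lines of Python. This sketch only illustrates how a relative subfolder value could be derived for each file; the function name `collect_with_subfolder` is hypothetical, and representing a file in the parent path itself as `None` is an assumption of the model.

```python
import os
from pathlib import Path


def collect_with_subfolder(base_dir):
    """Return (file name, subfolder value) pairs for every file under base_dir."""
    results = []
    for root, _dirs, files in os.walk(base_dir):
        rel = os.path.relpath(root, base_dir)
        # Assumption: files directly in the parent path carry no subfolder value.
        subfolder = None if rel == "." else Path(rel).as_posix()
        for name in files:
            results.append((name, subfolder))
    # Sort for a stable order, since os.walk order depends on the file system.
    return sorted(results, key=lambda item: (item[1] or "", item[0]))
```

Calling `collect_with_subfolder` on a directory that contains `a.txt` and `2024/q1/c.txt` pairs the second file with the value `2024/q1`, which is relative to the directory being read rather than an absolute path.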

One use case for the automatically generated Subfolder header is syncing the contents of a directory and its subdirectories from one source to another. Building on the earlier flow, instead of setting the subfolder manually in a script, you could enable the Recurse Remote Subdirectories setting in the File connector so that it picks up files from the subfolders of the directory it receives from. Each file picked up this way carries a Subfolder header naming the subfolder it came from. When the file is sent through the SFTP client connector, that value is carried over and the matching subfolder is created on the SFTP server.
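The sync scenario can be sketched end to end in Python, with a second local directory standing in for the SFTP server. This is an illustration under that assumption, not how Arc performs the transfer; `mirror_tree` is a hypothetical name.

```python
import os
import shutil


def mirror_tree(source_dir, dest_dir):
    """Copy every file under source_dir into dest_dir, preserving subfolders."""
    copied = []
    for root, _dirs, files in os.walk(source_dir):
        # Stand-in for the automatically generated Subfolder header.
        subfolder = os.path.relpath(root, source_dir)
        target_dir = os.path.normpath(os.path.join(dest_dir, subfolder))
        # Stand-in for the destination creating the subfolder on upload.
        os.makedirs(target_dir, exist_ok=True)
        for name in files:
            target = os.path.join(target_dir, name)
            shutil.copy2(os.path.join(root, name), target)
            copied.append(os.path.relpath(target, dest_dir))
    return sorted(copied)
```

The destination directory must sit outside the source directory. The returned list holds each copied file's path relative to the destination, which makes it easy to check that the two trees match.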